Generative Agents

Open-source frameworks and systems that orchestrate teams of AI agents—from architecture to deployment at Microsoft scale.

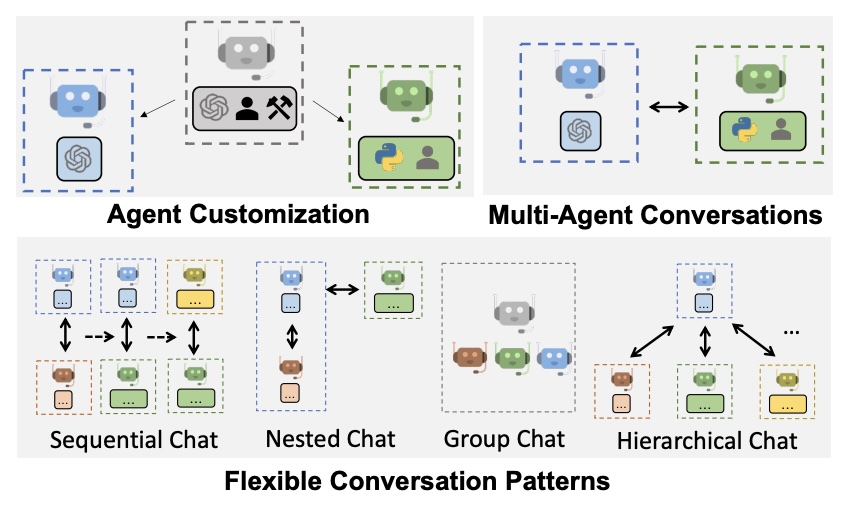

AutoGen

Co-creator

The most widely adopted open-source multi-agent framework, with 50k+ GitHub stars, powering Microsoft's Agent Framework.

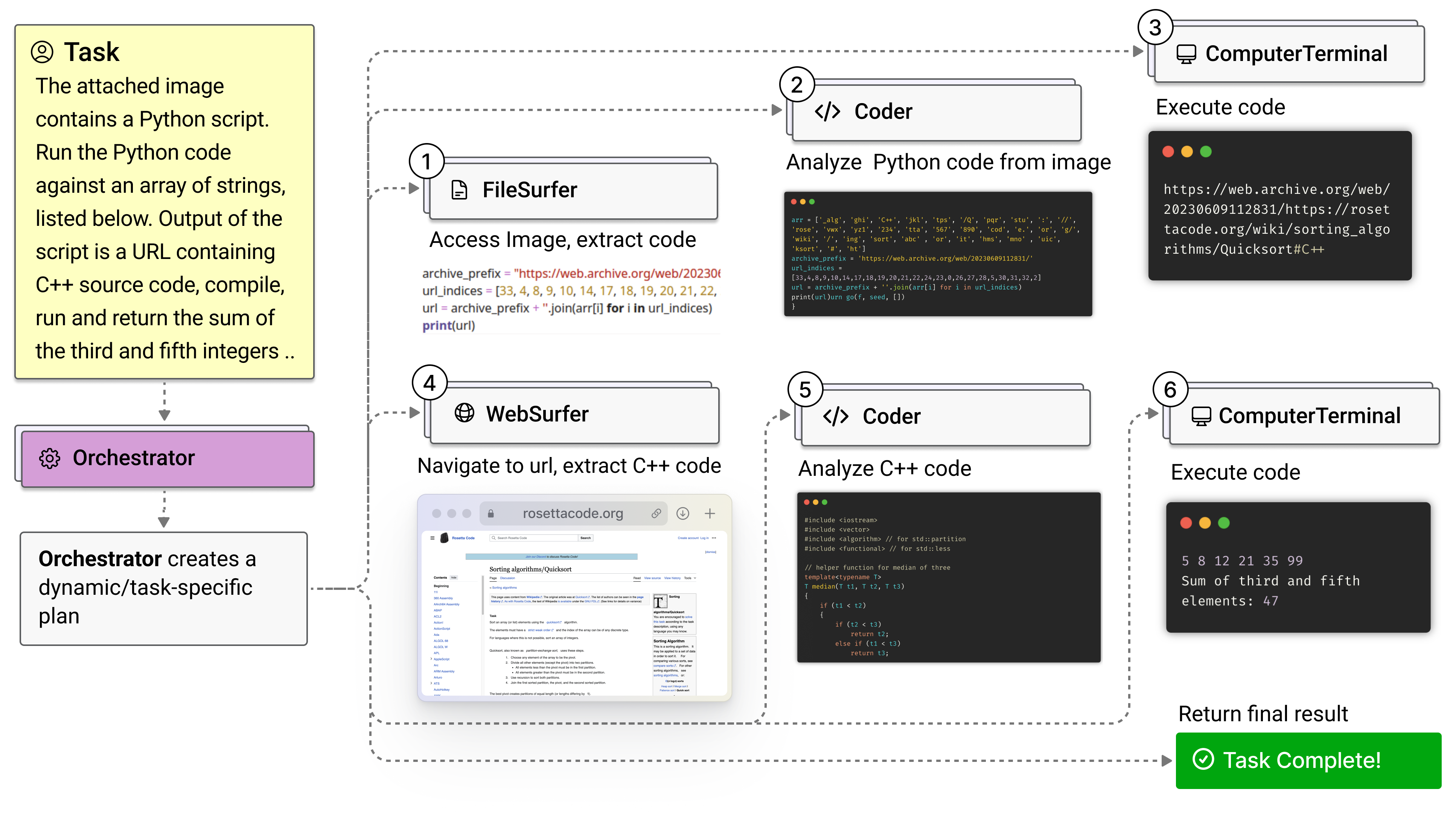

Five specialized agents orchestrated to browse, code, and reason—state-of-the-art on GAIA and WebArena.

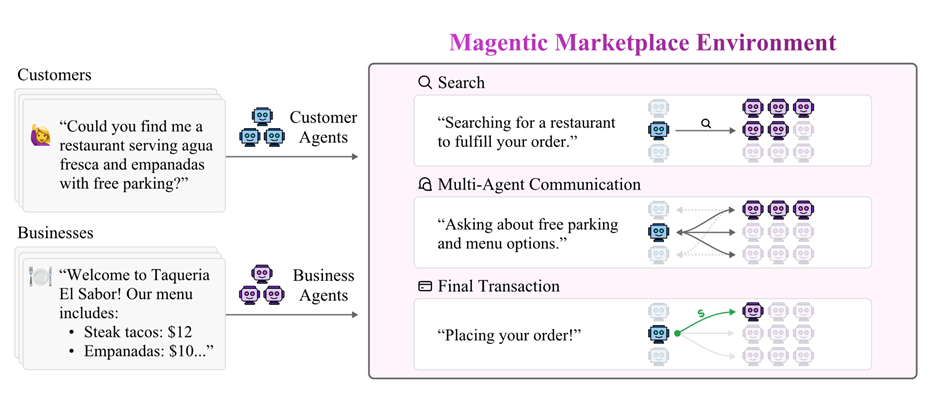

What happens when AI agents negotiate on behalf of humans? A simulation platform built with economists to find out.

Converts PDFs, DOCX, images, and 20+ formats to Markdown. 50k+ stars, 126k downloads/day.

Human-Centered GenAI

Agents fail in ways chatbots don't. I study where human-AI collaboration breaks down and build systems that fix it.

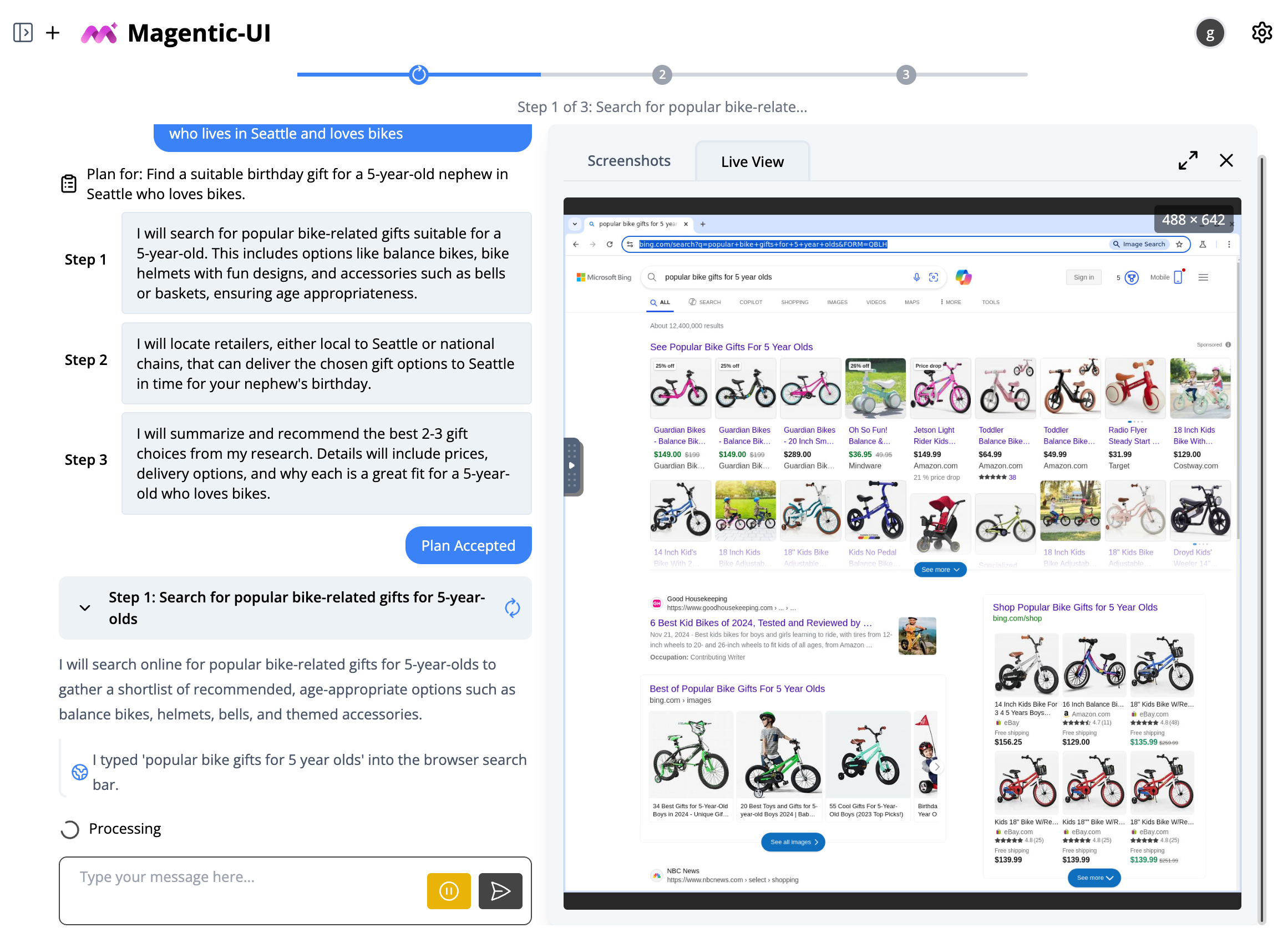

Magentic-UI

Co-lead

A web agent that plans with users before acting. Co-planning, guardrails, and full transparency, not autonomy for its own sake.

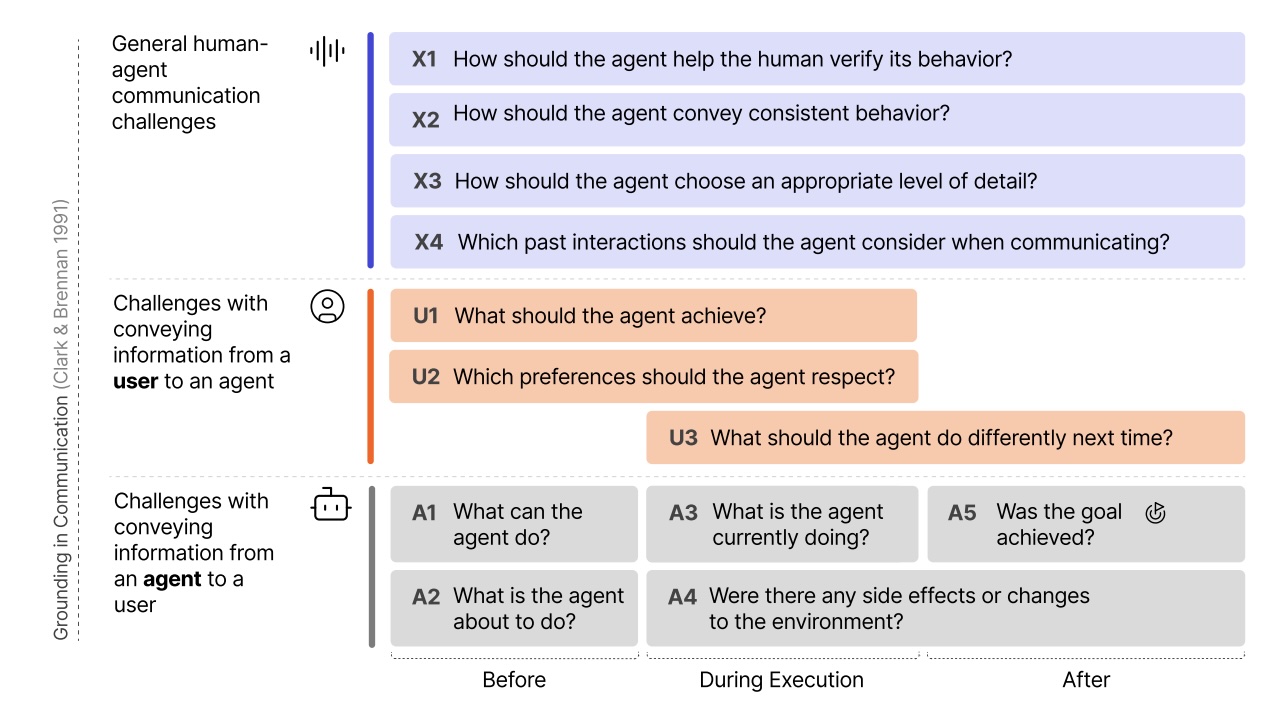

A taxonomy of 12 breakdowns in human-agent communication—from intent mismatch to transparency overload.

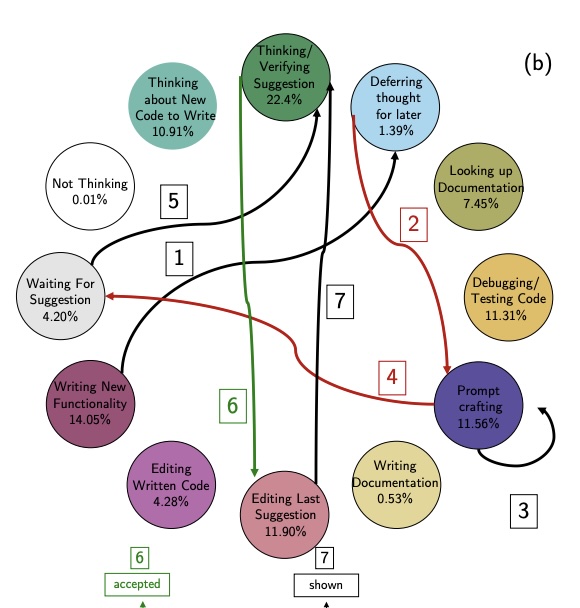

The largest observational study of Copilot usage. Main finding: programmers spend more time verifying suggestions than writing code.

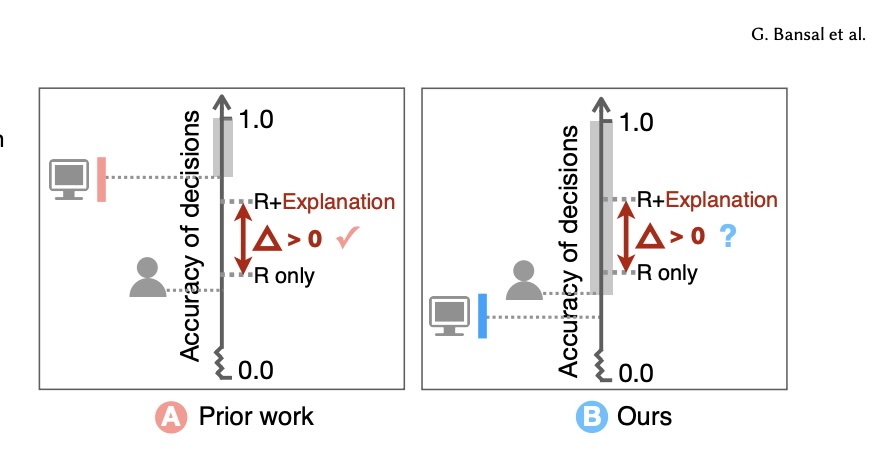

AI explanations only improve decisions when humans have enough expertise to disagree with the model.

Open Source

I love open source and programming. Most of my research ships as code you can run. Find me on GitHub. Fun fact: in December 2024, I was a top trending developer on GitHub worldwide.

Bio

Gagan Bansal is a Principal Researcher at Microsoft Research AI Frontiers, where he co-created AutoGen, one of the most widely adopted open-source frameworks for multi-agent AI systems and now the foundation of Microsoft's Agent Framework. He has co-led the development of Magentic-One, a generalist multi-agent system achieving state-of-the-art on GAIA and WebArena benchmarks; Magentic-UI, a human-in-the-loop web agent with co-planning and guardrails; and Magentic Marketplace, a collaboration with economists studying agent behavior in two-sided markets. His research spans both building agentic AI systems and studying how humans interact with them—identifying fundamental challenges in human-agent communication and examining how AI explanations, uncertainty displays, and code completion tools affect human decision-making and performance. His work has received a Best Paper award at COLM and an Honorable Mention at CHI. He holds a Ph.D. in Computer Science from the University of Washington, where he was advised by Dan Weld, and a B.Tech from IIT Delhi.